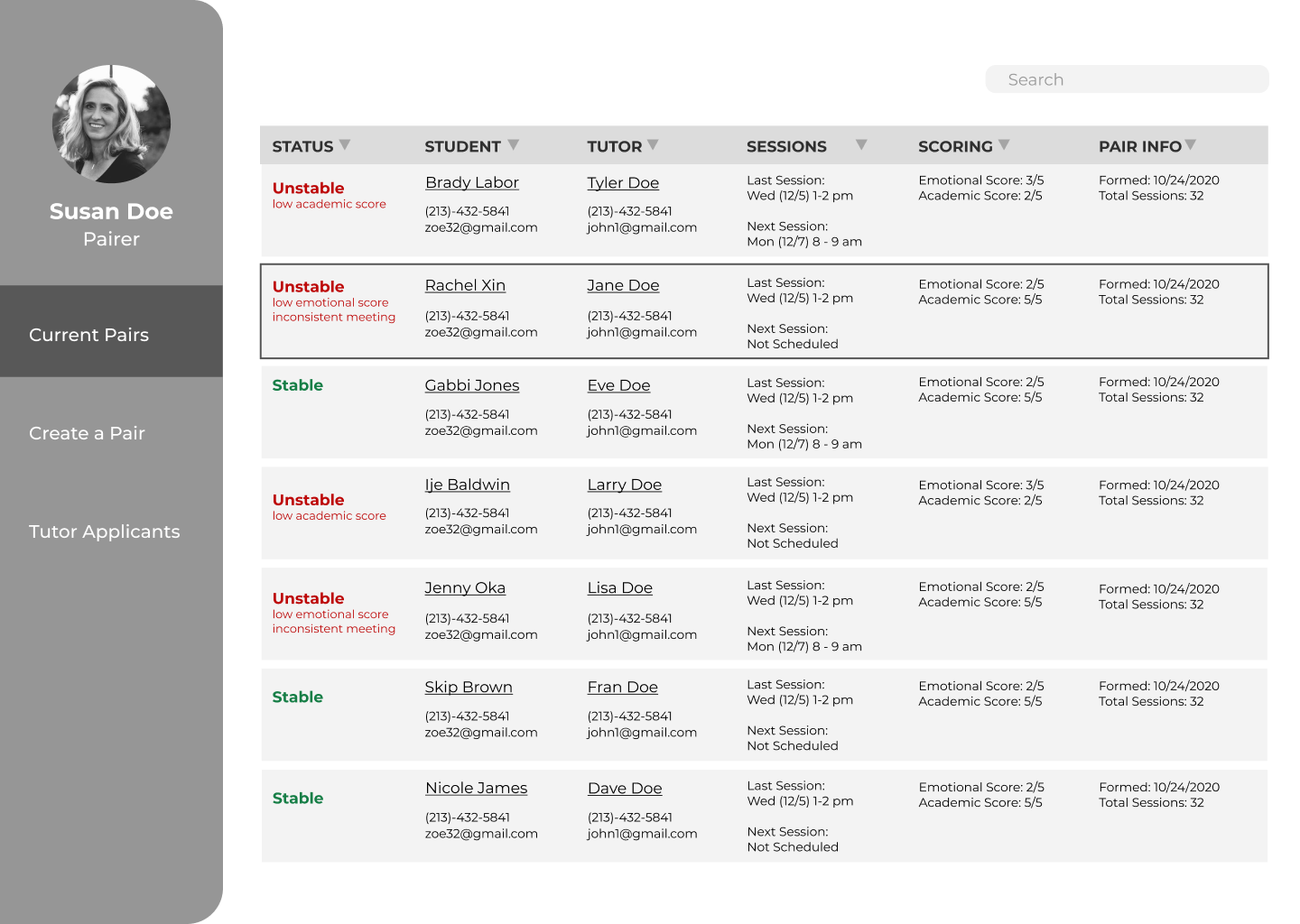

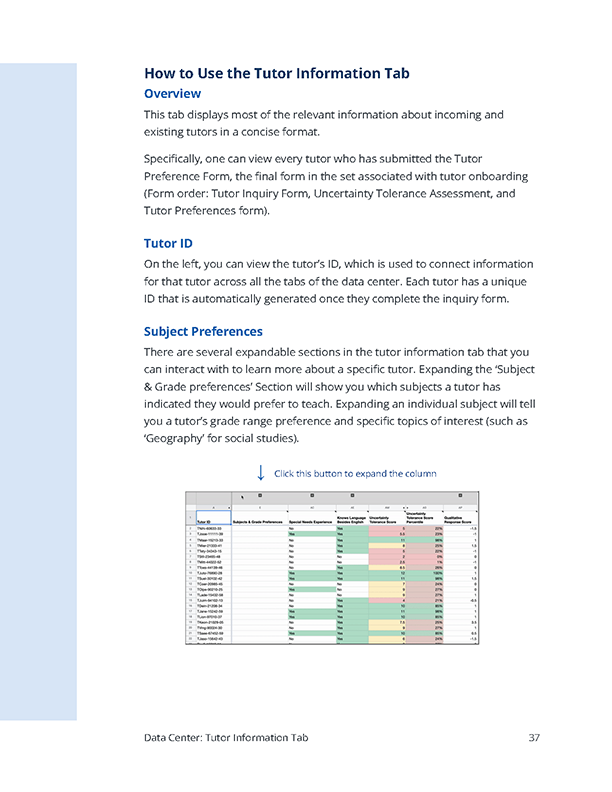

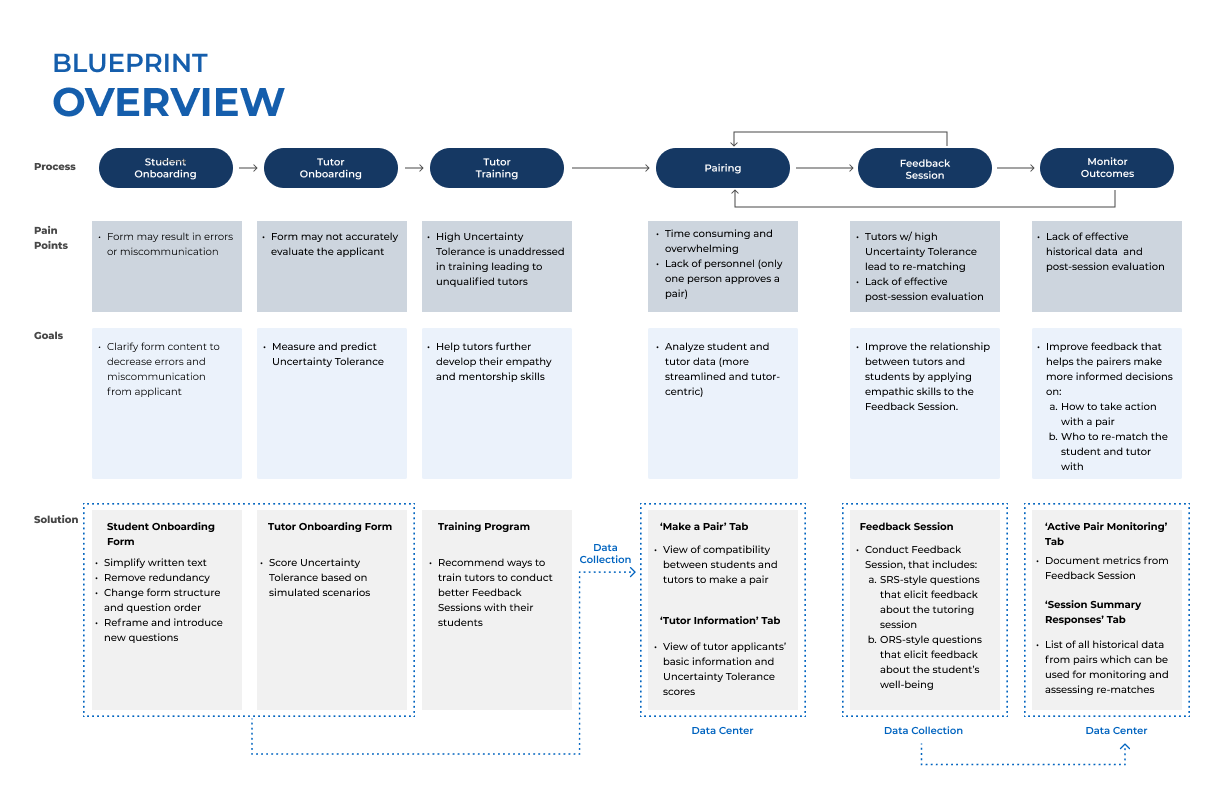

After interviewing tutors, parents, and Pandemic Professors volunteers, we found one consistent issue: many tutors were unable to handle students with poor attendance or communication and often asked to be re-matched away from those students. This recurring problem accounted for the majority of re-matches in the Pandemic Professors system. We began referring to a tutor’s ability to deal with unreliable students as ‘Tutor Uncertainty Tolerance’. One of our main goals throughout the project was to find a way to measure this uncertainty tolerance when tutors first entered the Pandemic Professors system, so that pairing staff could avoid pairing low-tolerance tutors with low-reliability students.

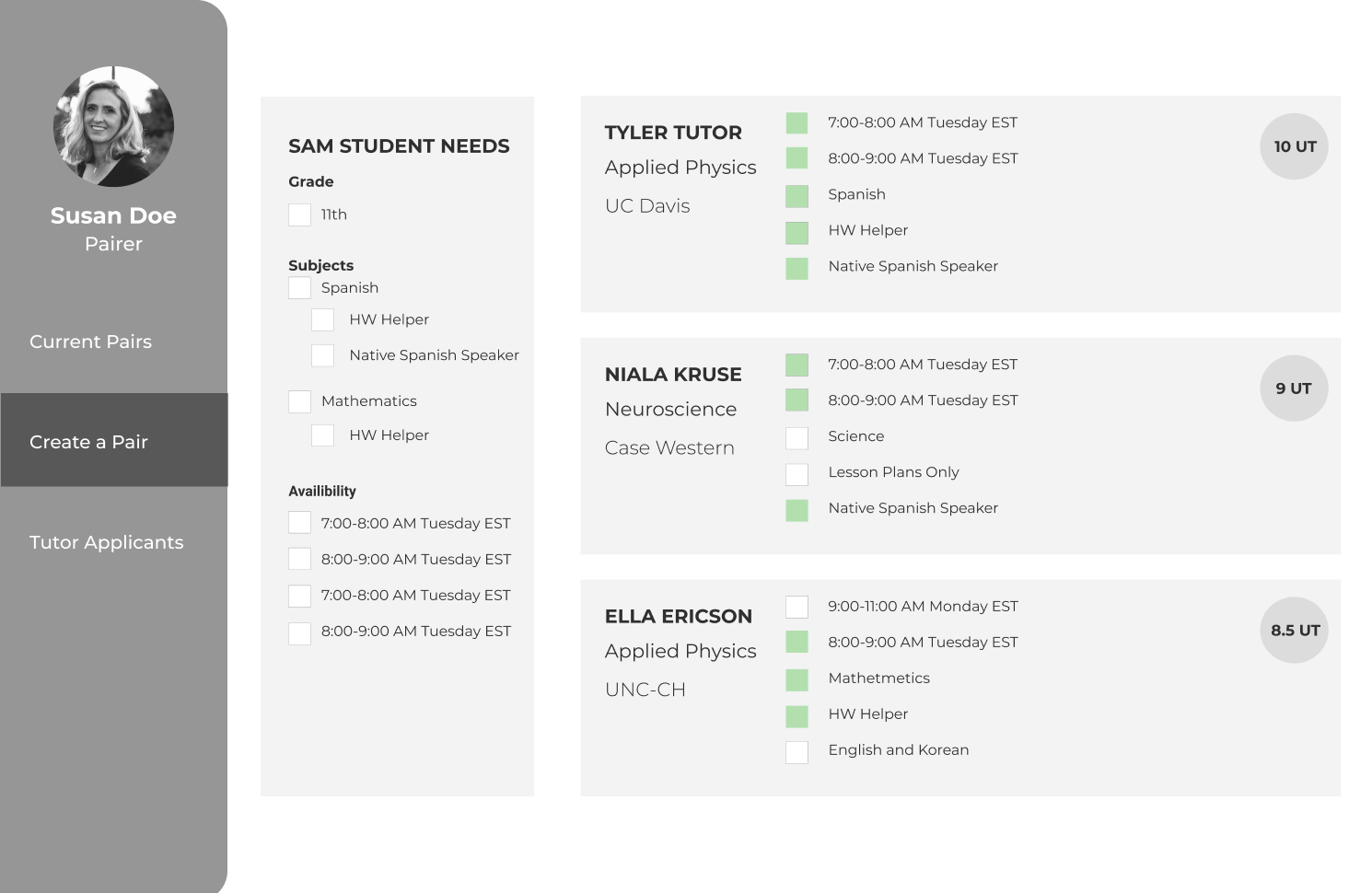

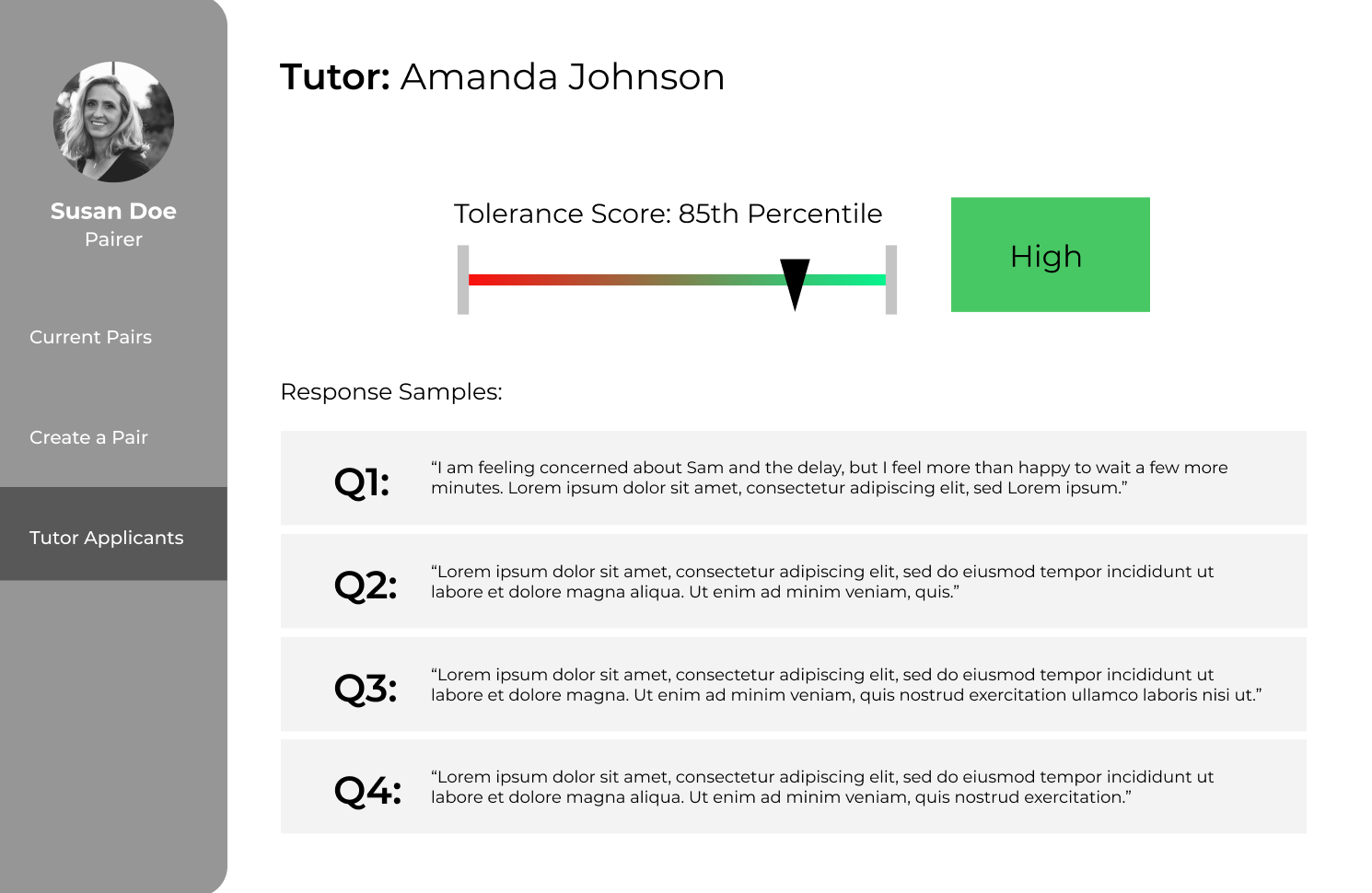

We developed a standardized measure called the Uncertainty Tolerance Assessment that Pandemic Professors could administer to new tutor applicants. This short assessment used scenarios adapted from our interviews to simulate highly uncertain situations. Tutor applicants chose between three options to progress through the story. Choices that reflected a greater degree of empathy or proactiveness earned the tutor more points. We coupled this multiple-choice assessment with a short written portion in which the tutor explained their rationale and described how they felt about the outcome of the scenario.
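The multiple-choice scoring described above can be sketched in a few lines of Python. This is only an illustration under assumptions: the prompts, the option labels `A`/`B`/`C`, the 0 to 2 point values, and the names `Step`, `SCENARIO`, and `score_choices` are all hypothetical, since the write-up states only that more empathetic or proactive choices earn more points.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Step:
    """One decision point in a scenario (hypothetical structure)."""
    prompt: str
    points_by_option: Dict[str, int]  # option label -> points awarded


# Hypothetical scenario content and point values, for illustration only.
SCENARIO: List[Step] = [
    Step("Student misses a session without notice.", {"A": 2, "B": 1, "C": 0}),
    Step("Parent has not replied in a week.", {"A": 0, "B": 2, "C": 1}),
]


def score_choices(steps: List[Step], choices: List[str]) -> int:
    """Sum the points for the option chosen at each step."""
    if len(steps) != len(choices):
        raise ValueError("expected exactly one choice per step")
    total = 0
    for step, choice in zip(steps, choices):
        if len(step.points_by_option) != 3:
            raise ValueError("each step should offer three options")
        if choice not in step.points_by_option:
            raise ValueError(f"unknown option: {choice!r}")
        total += step.points_by_option[choice]
    return total


# An applicant who picks "A" and then "B" earns the highest score here.
best_score = score_choices(SCENARIO, ["A", "B"])
```

Keeping the point values in data rather than in code would let pairing staff adjust the weighting of a choice without touching the scoring logic.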

We tested our scenarios with over 100 participants: half were general-population participants who fit the criteria to become a Pandemic Professors tutor, and half were active Pandemic Professors tutors. We found a significant correlation between the scenarios in the test, which suggested that we were measuring a consistent metric across scenarios. Further, we discovered that the more experienced a tutor was, the more they relied on established guidelines to direct their answers. Because we wanted this assessment to be a litmus test for the tutor’s internal tolerance, we decided to have only new tutor applicants take the test, removing the experience bias that established tutors displayed. Finally, we found that the written responses across all populations were incredibly rich and helped build a better picture of how applicants valued desirable traits such as empathy and proactiveness. This led us to focus on developing a method for quickly evaluating the written responses, giving us a second metric alongside the multiple-choice score.
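As a rough illustration of the consistency check between scenarios mentioned above, the sketch below computes a Pearson correlation coefficient between participants' scores on two scenarios. It is an assumption that Pearson's r is a suitable stand-in; the write-up does not say which statistic or significance test was used. The function name `pearson_r` and the score lists are made up for the example and are not study data.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two paired score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equally long lists with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of co-deviations and sums of squared deviations from the means.
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / math.sqrt(var_x * var_y)


# Made-up scores for five participants on two scenarios (not real data).
scenario_1_scores = [4, 6, 3, 5, 2]
scenario_2_scores = [5, 6, 2, 4, 3]
r = pearson_r(scenario_1_scores, scenario_2_scores)
```

A coefficient near 1 would mean participants who score high on one scenario tend to score high on the other. Judging whether a correlation is statistically significant needs a separate test, which this sketch does not perform.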